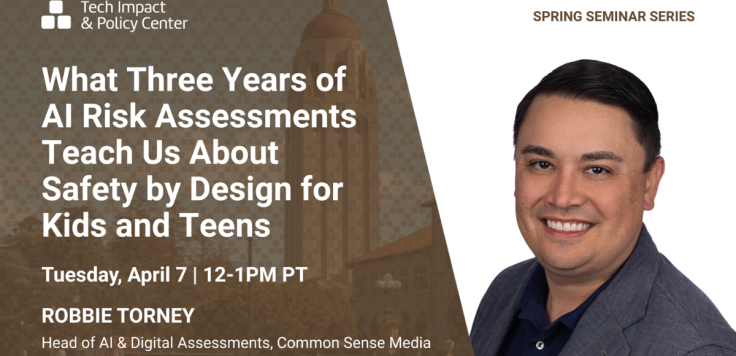

What Three Years of AI Risk Assessments Teach Us About Safety Design for Kids and Teens

Date

April 7, 2026 11:40 am – 1:00 pm

Location

Virtual

Hosted by Stanford Cyber Policy Center

Drawing on three years of risk assessments conducted by the nonprofit Common Sense Media across major AI platforms, including ChatGPT, Gemini, Meta AI, Grok, and numerous AI companion services, this talk examines what they’ve learned about the gap between current AI design and kid and teen safety. The presentation will outline their approach to evaluating developmental appropriateness in AI systems. Through concrete examples from platform evaluations, they’ll explore patterns in safeguarding young users, including how platforms provide “advice” on a range of topics, how they handle mental health topics, and more traditional challenges around age-appropriate content. These findings reveal structural challenges in how AI products are currently conceived and deployed for young people, from design assumptions that ignore developmental differences to business models that prioritize engagement over safety.